This article was originally published by Tyler Durden at Zero Hedge

Nearly everything we buy, how we buy, and where we’re buying from is secretly fed into AI-powered verification services that help companies guard against credit-card and other forms of fraud, according to the Wall Street Journal.

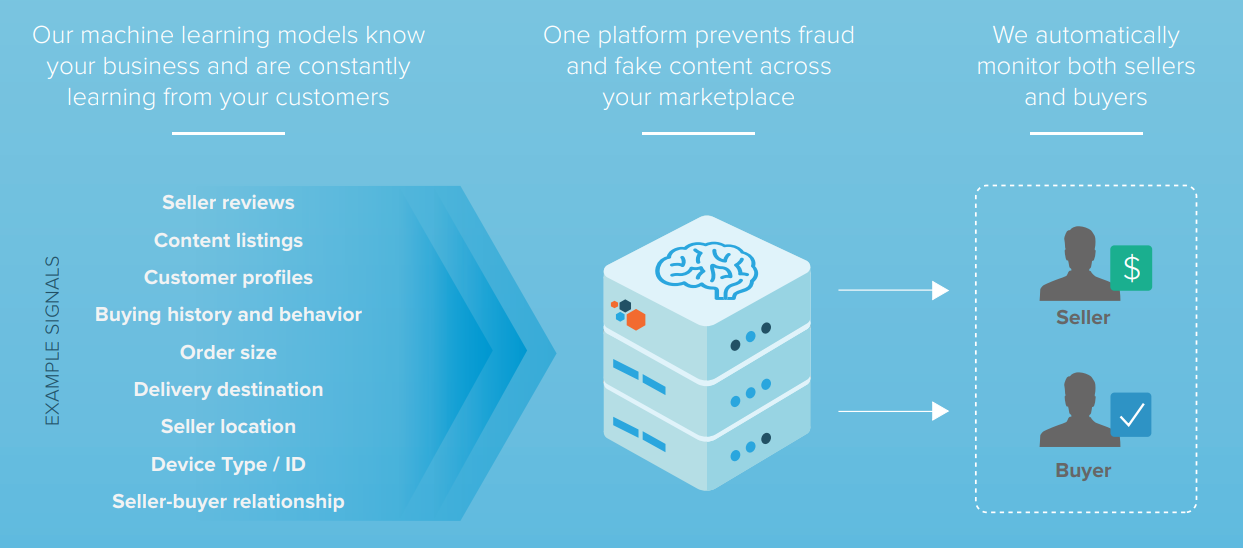

More than 16,000 signals are analyzed by a service called Sift, which generates a “Sift score” ranging from 1 to 100. The score is used to flag devices, credit cards and accounts that a vendor may want to block based on a person or entity’s overall “trustworthiness,” according to a company spokeswoman.

From the Sift website: “Each time we get an event — be it a page view or an API event — we extract features related to those events and compute the Sift Score. These features are then weighed based on fraud we’ve seen both on your site and within our global network, and determine a user’s Score. There are features that can negatively impact a Score as well as ones which have a positive impact.”

The system is similar to a credit score – except there’s no way to find out your own Sift score.

Factors which contribute to one’s Sift score (per the WSJ):

• Is the account new?

• Are there a lot of digits at the end of an email address?

• Is the transaction coming from an IP address that’s unusual for your account?

• Is the transaction coming from a region where there are a lot of hackers, such as China, Russia or Eastern Europe?

• Is the transaction coming from an anonymization network?

• Is the transaction happening at an odd time of day?

• Has the credit card being used had chargebacks associated with it?

• Is the browser different from what you typically use?

• Is the device different from what you typically use?

• Is the cadence of the way you typed out your password typical for you? (tracked by some advanced systems)

Sources: Sift, SecureAuth, Patreon
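To make the idea of weighing signals concrete, here is a minimal sketch of how yes/no factors like the ones listed above could be combined into a 1 to 100 score. It is not Sift's method: the signal names, the weights, the baseline and the choice of a logistic formula are all assumptions made for illustration, and so is the convention that a higher score means higher risk.

```python
import math

# Hypothetical weights, for illustration only. Sift's real features
# (reportedly more than 16,000) and their weights are not public.
WEIGHTS = {
    "new_account": 1.2,
    "many_trailing_digits_in_email": 0.8,
    "unusual_ip": 1.0,
    "high_risk_region": 0.9,
    "anonymization_network": 1.5,
    "odd_time_of_day": 0.4,
    "card_has_chargebacks": 2.0,
    "unfamiliar_browser": 0.5,
    "unfamiliar_device": 0.7,
    "atypical_typing_cadence": 0.6,
}
BASELINE = -3.0  # assumed log-odds of fraud when no signal fires


def risk_score(signals):
    """Map the names of the signals that fired to a score from 1 to 100."""
    fired = set(signals)
    unknown = fired - set(WEIGHTS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    # Add up the weights of the fired signals, then squash the total
    # into a probability with the logistic function.
    log_odds = BASELINE + sum(WEIGHTS[name] for name in fired)
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    # Scale to the 1-100 range (higher = riskier, by assumption).
    return max(1, min(100, round(probability * 100)))


quiet = risk_score([])
noisy = risk_score(["new_account", "card_has_chargebacks"])
```

In this toy version every additional signal can only push the score up. The Sift description quoted earlier says some features have a positive impact as well, which would correspond to negative weights here.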

The system is used by companies such as Airbnb, OpenTable, Instacart and LinkedIn.

Companies that use services like this often mention it in their privacy policies—see Airbnb’s here—but how many of us realize our account behaviors are being shared with companies we’ve never heard of, in the name of security? How much of the information one company shares with these fraud-detection services is used by other clients of that service? And why can’t we access any of this data ourselves, to update, correct or delete it?

According to Sift and competitors such as SecureAuth, which has a similar scoring system, this practice complies with regulations such as the European Union’s General Data Protection Regulation, which mandates that companies don’t store data that can be used to identify real human beings unless they give permission.

Unfortunately GDPR, which went into effect a year ago, has rules that are often vaguely worded, says Lisa Hawke, vice president of security and compliance at the legal tech startup Everlaw. All of this will have to get sorted out in court, she adds. –Wall Street Journal

In order to optimize scoring “Sift regularly evaluates the performance of our models and tries to minimize bias and variance in order to maximize accuracy,” according to a spokeswoman.

“While we don’t perform audits of our customers’ systems for bias, we enable the organizations that use our platform to have as much visibility as possible into the decision trees, models or data that were used to reach a decision,” according to SecureAuth Vice President and chief security architect Stephen Cox. “In some cases, we may not be fully aware of the means by which our services and products are being used within a customer’s environment.”

Not always right

While Sift and SecureAuth strive for accuracy, it is sometimes difficult to distinguish authentic purchasing behavior from fraud.

“Sometimes your best customers and your worst customers look the same,” said Jacqueline Hart, head of trust and safety at Patreon – a site used by artists and creators to allow benefactors to support them. “You can have someone come in and say I want to pledge $10,000 and they’re either a fraudster or an amazing patron of the arts,” Hart added.

If an account is rejected due to its Sift score, Patreon directs the benefactor to the company’s trust and safety team. “It’s an important way for us to find out if there are any false positives from the Sift score and reinstate the account if it shouldn’t have been flagged as high risk,” said Hart.

There are many potential tells that a transaction is fishy. “The amazing thing to me is when someone fails to log in effectively, you know it’s a real person,” says Ms. Hart. The bots log in perfectly every time. Email addresses with a lot of numbers at the end and brand new accounts are also more likely to be fraudulent, as are logins coming from anonymity networks such as Tor.

These services also learn from every transaction across their entire system, and compare data from multiple clients. For instance, if an account or mobile device has been associated with fraud at, say, Instacart, that could mark it as risky for another company, say Wayfair—even if the credit card being used seems legitimate, says a Sift spokeswoman. –Wall Street Journal

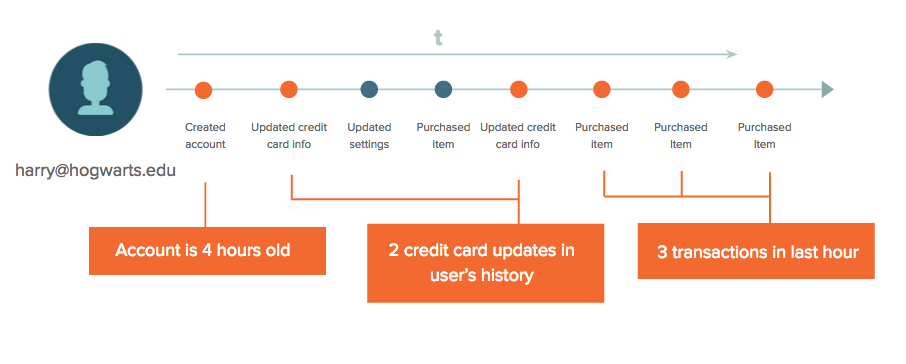

A person’s Sift score is constantly changing based on that user’s behavior, and any new information the system gathers about them, according to the spokeswoman. From Sift:

We learn in real-time, which means Scores are constantly being recalculated based on new knowledge of fraudulent users and patterns. For example, when someone logs in, we’ve found out a lot of information in the meantime about suspicious devices, IP addresses, shipping addresses, etc., based on the activity of other users. Add this to the fact that there may have been some new labeled users since their last login, and the scores can sometimes have a significant change. This is also more likely if the user hasn’t had much activity on your site. –Sift.com
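The effect described here, where one user's score moves because of what was learned from other users and other clients, can be sketched in a few lines. This is a toy model under stated assumptions, not Sift's implementation: the `SharedReputation` class, its method names and the "fraction of flagged attributes" formula are all hypothetical.

```python
from collections import defaultdict


class SharedReputation:
    """Toy store of fraud labels pooled across merchants (hypothetical)."""

    def __init__(self):
        # Attribute (device ID, IP address, ...) -> merchants that
        # reported fraud involving it.
        self._fraud_reports = defaultdict(set)

    def label_fraud(self, merchant, attributes):
        """Record that a merchant saw fraud tied to these attributes."""
        for attribute in attributes:
            self._fraud_reports[attribute].add(merchant)

    def score(self, attributes):
        """Share of a user's attributes with a fraud report, as 1-100."""
        attributes = set(attributes)
        if not attributes:
            return 1
        flagged = sum(1 for a in attributes if a in self._fraud_reports)
        return max(1, round(100 * flagged / len(attributes)))


network = SharedReputation()
user = ["device:abc", "ip:203.0.113.7"]  # made-up identifiers

before = network.score(user)
# A different merchant labels the same device as fraudulent.
network.label_fraud("merchant_a", ["device:abc"])
after = network.score(user)
```

The user did nothing between the two calls, yet `after` is higher than `before`, because a label from another merchant touched a device they share.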

While Sift judges whether or not one can be trusted, there’s no file with your name on it that it can produce for review – because it doesn’t need your name to analyze your behavior, according to the report – which seems like total BS.

“Our customers will send us events like ‘account created,’ ‘profile photo uploaded,’ ‘someone sent a message,’ ‘review written,’ ‘an item was added to shopping cart,’” says Sift CEO Jason Tan.

It’s technically possible to make user data difficult or impossible to link to a real person. Apple and others say they take steps to prevent such “de-anonymizing.” Sift doesn’t use those techniques. And an individual’s name can be among the characteristics its customers share with it in order to determine the riskiness of a transaction.

In the gap between who is taking responsibility for user data, Sift or its clients, there appears to be ample room for the kind of slip-ups that could run afoul of privacy laws. Without an audit of such a system it’s impossible to know. Companies live under increasing threat of prosecution, but as just-released research on biases in Facebook’s advertising algorithm suggests, even the most sophisticated operators don’t seem to be fully aware of how their systems are behaving. –Wall Street Journal

“I would argue that in our desire to protect privacy, we have to be careful, because are we going to make it impossible for the good guys to perform the necessary function of security?” asks Anshu Sharma – co-founder of Clearedin, a startup which helps companies avoid falling victim to email phishing attacks.

His solution? Transparency. When a company rejects a potential customer based on their Sift score, for example, it should explain why – even if that exposes how the scoring system works.
